
I am a PhD student at the Language Technologies Institute at Carnegie Mellon University, working with Prof. Eric P. Xing. I also worked closely with Prof. Zhiting Hu. My research centers on world models and agent models as complementary foundations for general-purpose intelligence. World models are generative simulators of all actionable possibilities across physical, social, and conceptual domains, letting agents run thought experiments and reason about counterfactual futures. Agent models internalize agency (e.g., hierarchical goals, an evolving identity, world-model-based planning, self-regulation, and self-directed learning) rather than relying on externally defined structure, with the world model as the substrate enabling the rest. Applications include physical AI (e.g., robots, video games) and language-based agents (e.g., interactive reasoning, computer/browser use). I completed my MS at CMU's Machine Learning Department. Before that, I worked as a data scientist in industry following an internship at Baidu Research, where I was advised by Prof. Hui Xiong. I earned my undergraduate degree from Columbia University, with a double major in Mathematics-Statistics and Computer Science.




Eric Xing*, Mingkai Deng*, Jinyu Hou, Zhiting Hu arXiv 2025 paper This position paper critiques several schools of thought on world modeling and proposes a new architecture for a general-purpose world model, with an outlook on a Physical, Agentic, and Nested (PAN) AGI system enabled by such a model.


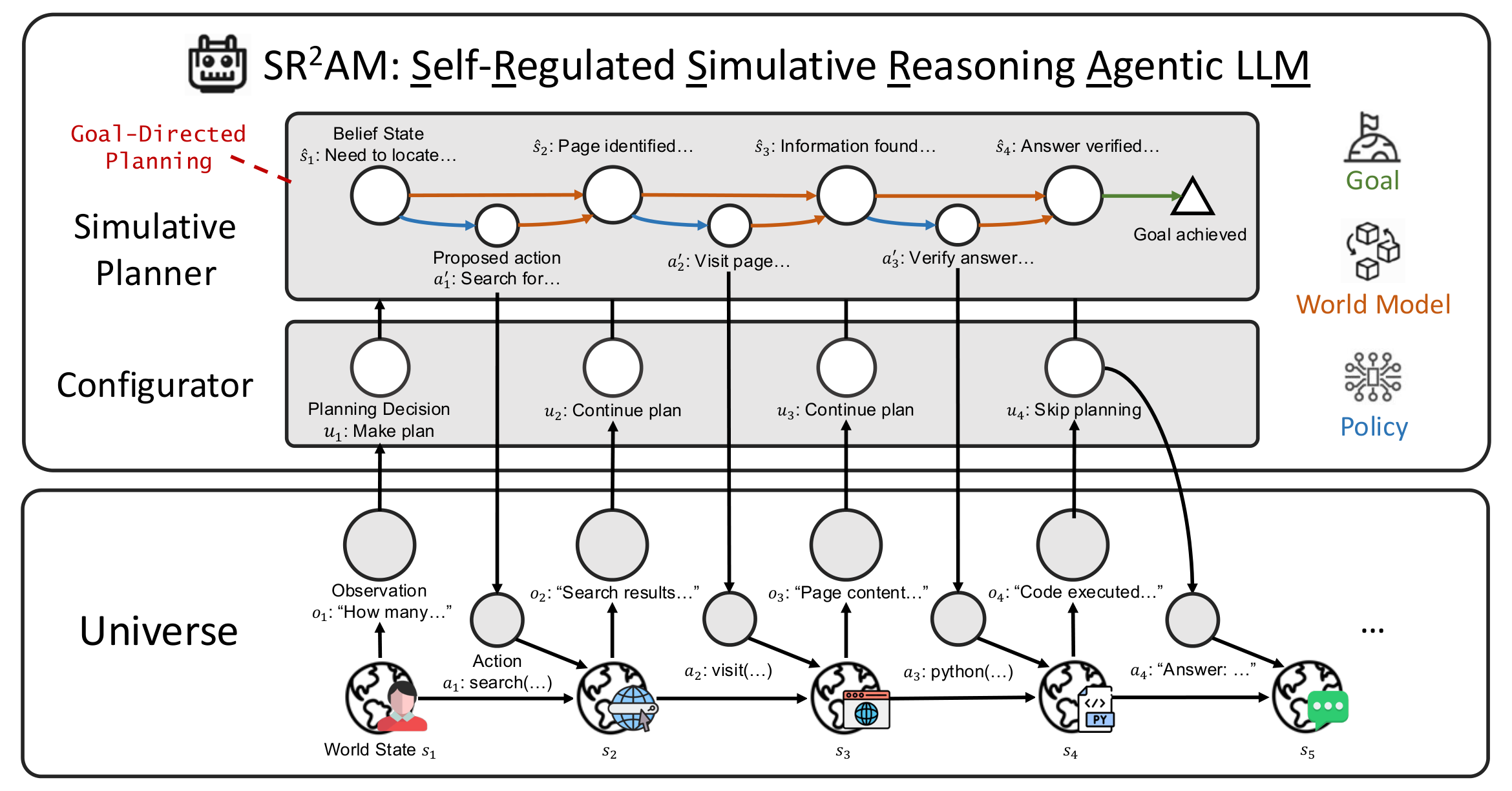

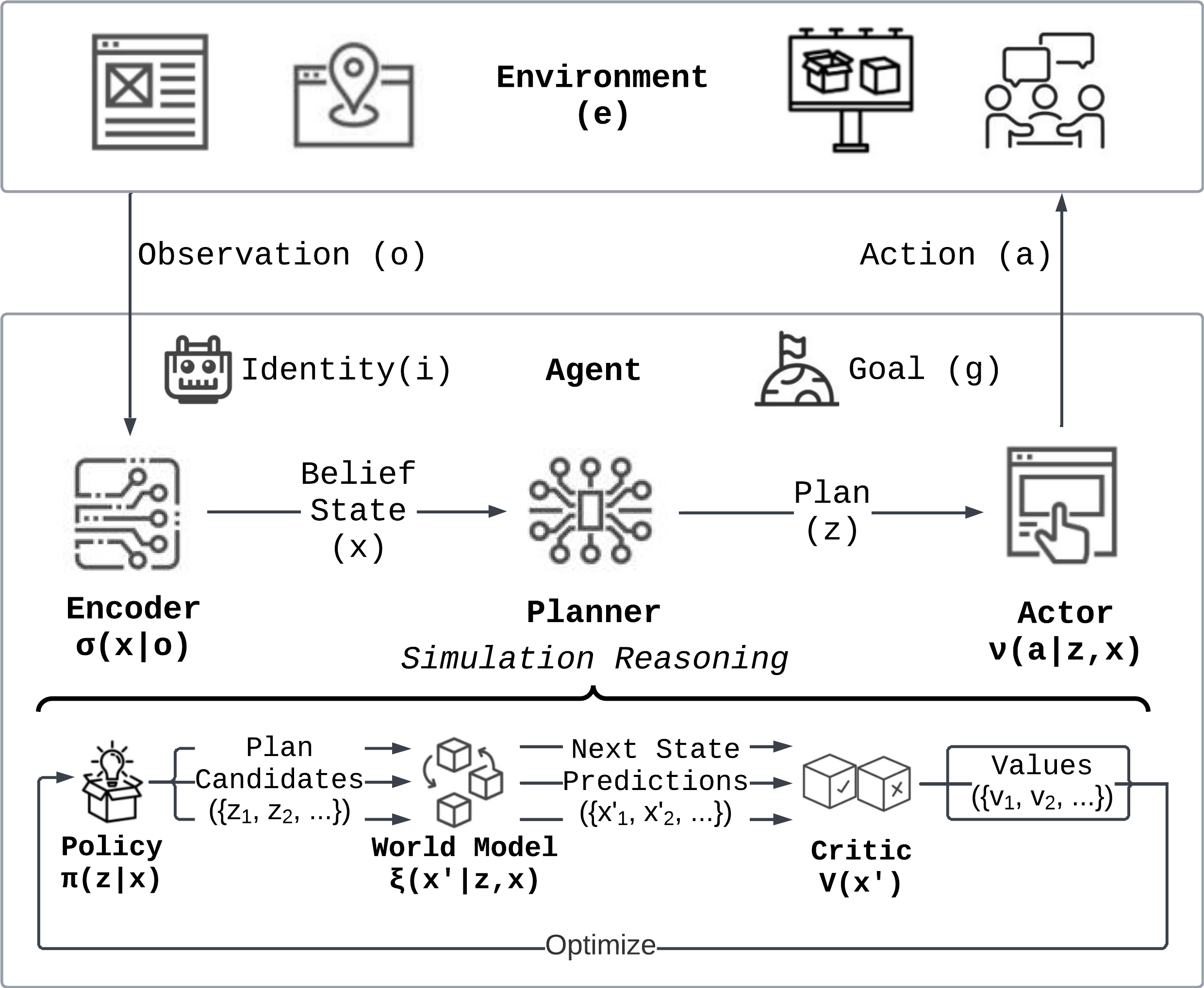

Mingkai Deng*, Jinyu Hou*, Lara Sá Neves*, Varad Pimpalkhute, Taylor W. Killian, Zhengzhong Liu, Eric P. Xing arXiv 2026 website / paper / code / model (v0.1-8B) / model (v1.0-30B) A model of efficient agentic reasoning as a combination of simulative reasoning via a world model (System II), self-regulation of planning (System III), and reactive execution (System I). The resulting 30B model, SR2AM-v1.0, competes with 685B–1T LLMs while using 25.8–95.3% fewer reasoning tokens than comparable agentic LLMs.


Mingkai Deng*, Jinyu Hou*, Zhiting Hu, Eric P. Xing Berkeley LLM Agents Hackathon (2nd Place, Winner of Fundamental Track) website / paper / code A general reasoning paradigm for agentic planning via a world model (System II), rather than just a reactive policy (System I). The resulting agent architecture (SiRA) achieves a 124% higher success rate than a reactive baseline. Success rate on constrained navigation: 0% → 32.2% vs. a representative open-web agent.


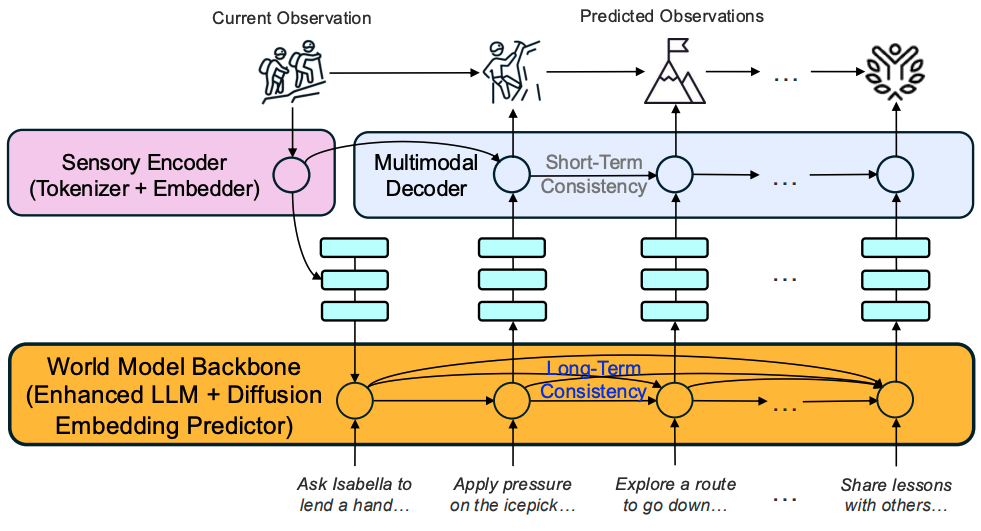

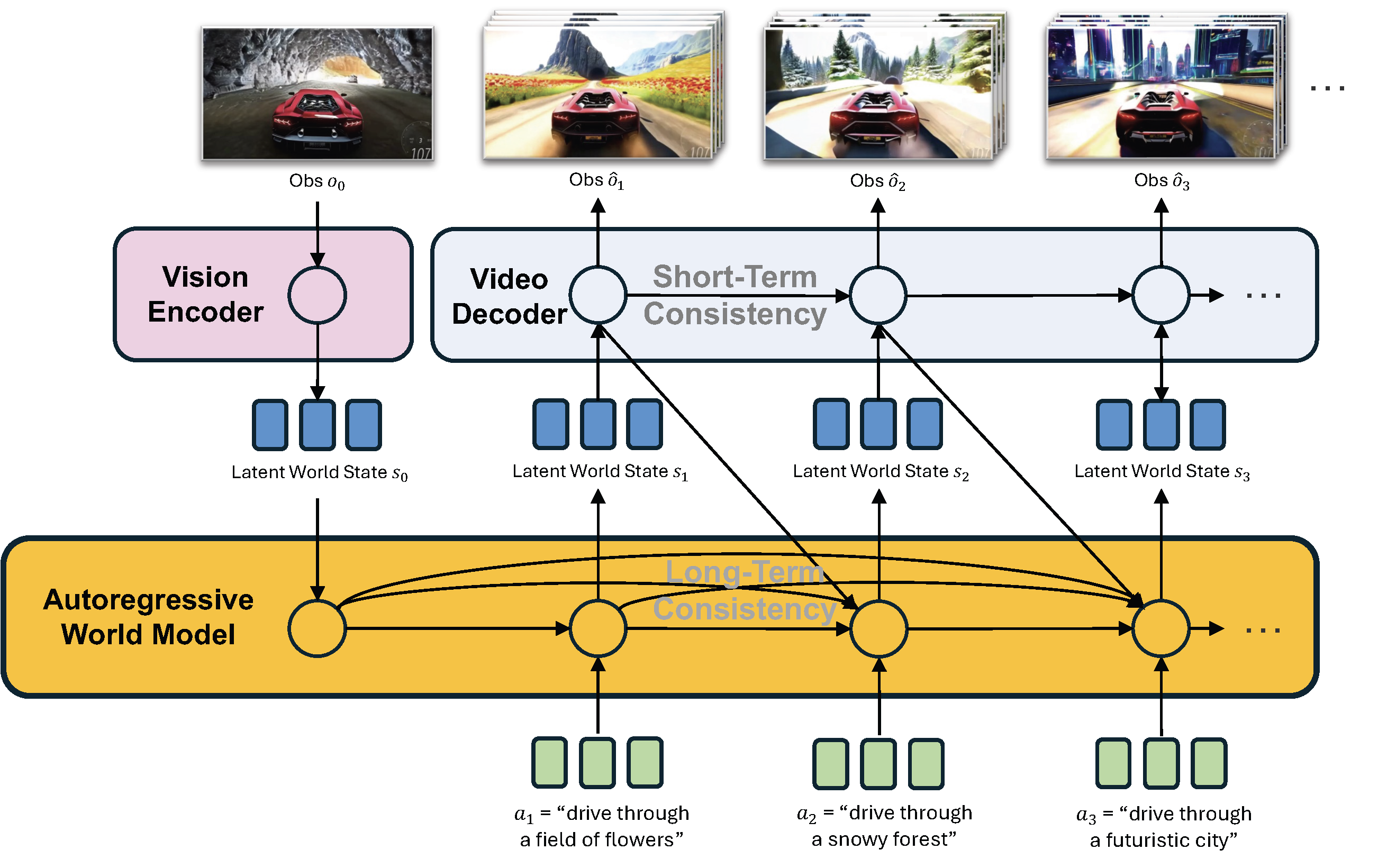

PAN Team, Institute of Foundation Models: Jiannan Xiang, Yi Gu, Zihan Liu, Zeyu Feng, Qiyue Gao, Yiyan Hu, Benhao Huang, Guangyi Liu, Yichi Yang, Kun Zhou, Davit Abrahamyan, Arif Ahmad, Ganesh Bannur, Junrong Chen, Kimi Chen, Mingkai Deng, Ruobing Han, Xinqi Huang, Haoqiang Kang, Zheqi Liu, Enze Ma, Hector Ren, Yashowardhan Shinde, Rohan Shingre, Ramsundar Tanikella, Kaiming Tao, Dequan Yang, Xinle Yu, Cong Zeng, Binglin Zhou, Zhengzhong Liu, Zhiting Hu, Eric P. Xing arXiv 2025 website / paper A general world model that predicts future world states through high-quality, action-conditioned video simulation. Built on the Generative Latent Prediction (GLP) architecture that combines autoregressive latent dynamics with language grounding, PAN excels at long-horizon forecasting and simulative reasoning.


Zhengzhong Liu*, Bowen Tan*, Hongyi Wang*, Willie Neiswanger, Tianhua Tao, Haonan Li, Fajri Koto, Yuqi Wang, Suqi Sun, Omkar Pangarkar, Richard Fan, Yi Gu, Victor Miller, Liqun Ma, Liping Tang, Nikhil Ranjan, Yonghao Zhuang, Guowei He, Renxi Wang, Mingkai Deng, Robin Algayres, Yuanzhi Li, Zhiqiang Shen, Preslav Nakov, Eric Xing arXiv 2025 paper / code / data / base model / chat model A 65-billion-parameter, 360-degree open-source LLM that surpasses LLaMA-65B and rivals LLaMA2-70B while requiring fewer FLOPs and tokens.


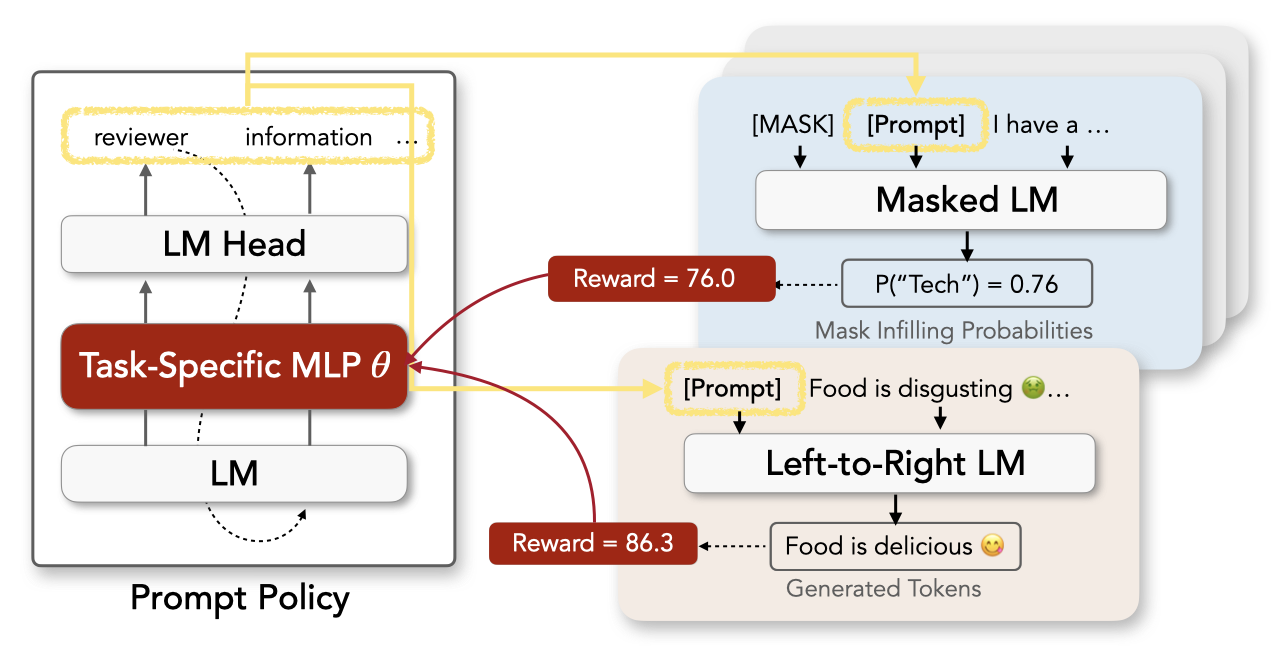

Mingkai Deng*, Jianyu Wang*, Cheng-Ping Hsieh*, Yihan Wang, Han Guo, Tianmin Shu, Meng Song, Eric P. Xing, Zhiting Hu EMNLP 2022 paper / code An efficient and flexible framework for using RL to optimize discrete text prompts that enable pre-trained LMs (e.g., BERT, GPT-2) to perform diverse NLP tasks. Experiments on few-shot classification and unsupervised text style transfer show superior performance to a wide range of existing methods.


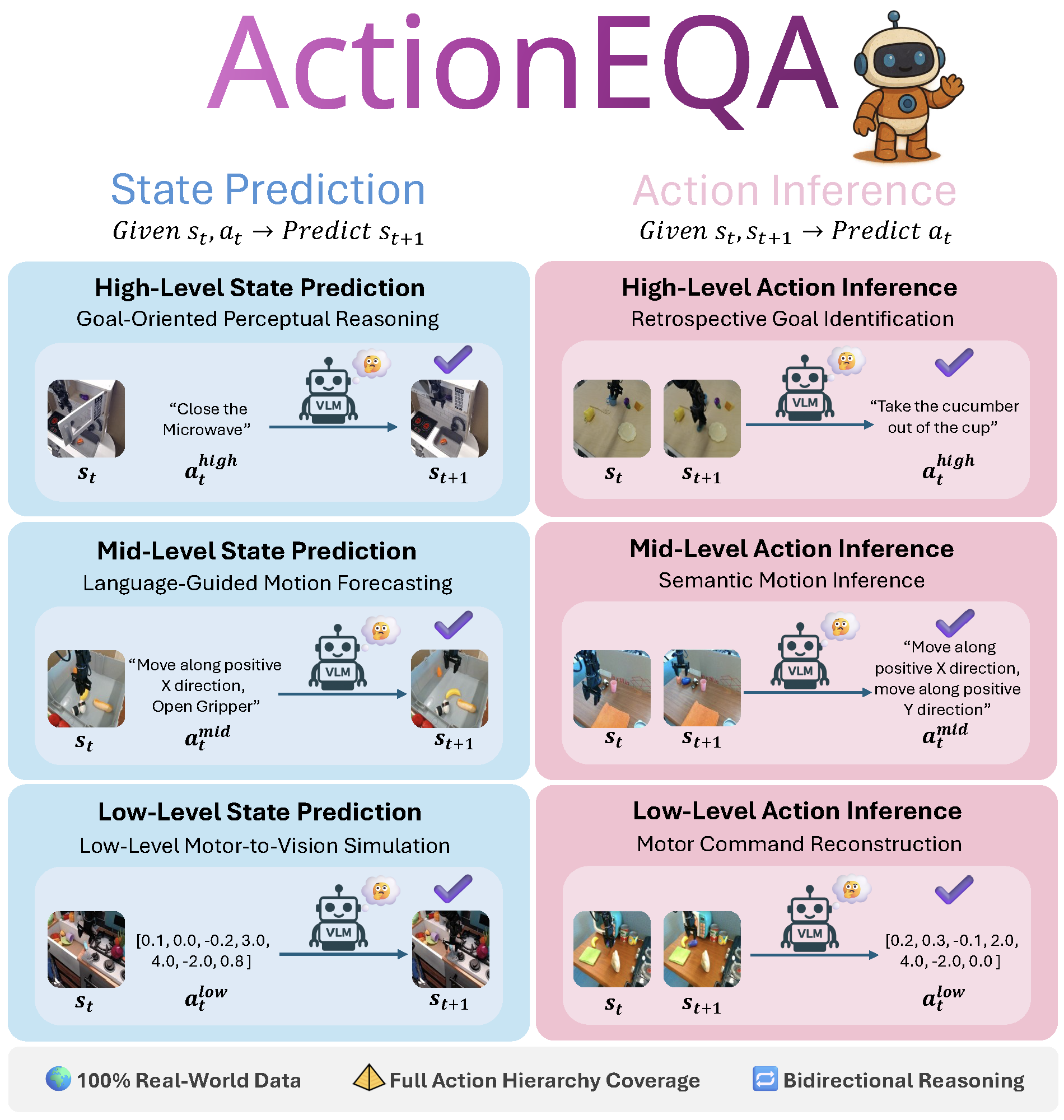

Tianwei Bao*, Qineng Wang*, Kangrui Wang, Mingkai Deng, Guangyi Liu, Jiayuan Mao, Larry Birnbaum, Zhiting Hu, Eric P. Xing, Zhaoran Wang, Manling Li Transactions on Machine Learning Research (TMLR) 2026 paper The first Embodied Question Answering benchmark that methodically evaluates how well Vision-Language Models bridge the semantic–physical gap—translating high-level instructions into low-level physical actions. ActionEQA reveals a large gap between VLM and human performance (58.4% vs. 95.6%). |


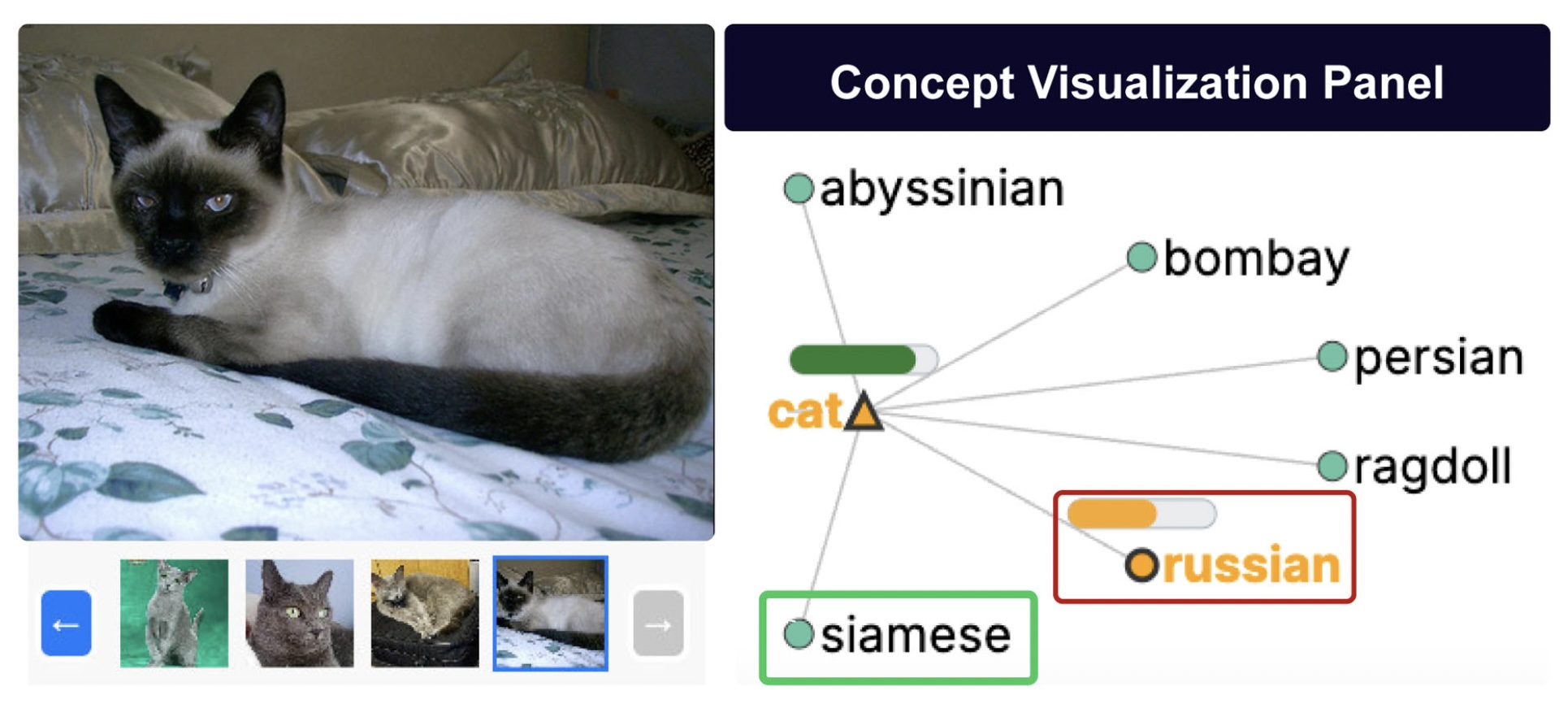

Reza Shahriari, Yichi Yang, Danish Nisar Ahmed Tamboli, Michael Perez, Yuheng Zha, Jinyu Hou, Mingkai Deng, Eric D. Ragan, Jaime Ruiz, Daisy Zhe Wang, Zhiting Hu, Eric Xing IEEE Computer Graphics & Applications 2025 paper A multimodal conversational system that blends natural language and visual interaction for debugging hierarchical object-classification models, letting users express high-level debugging goals while adaptive explanations surface only the most task-relevant visual or textual details. |


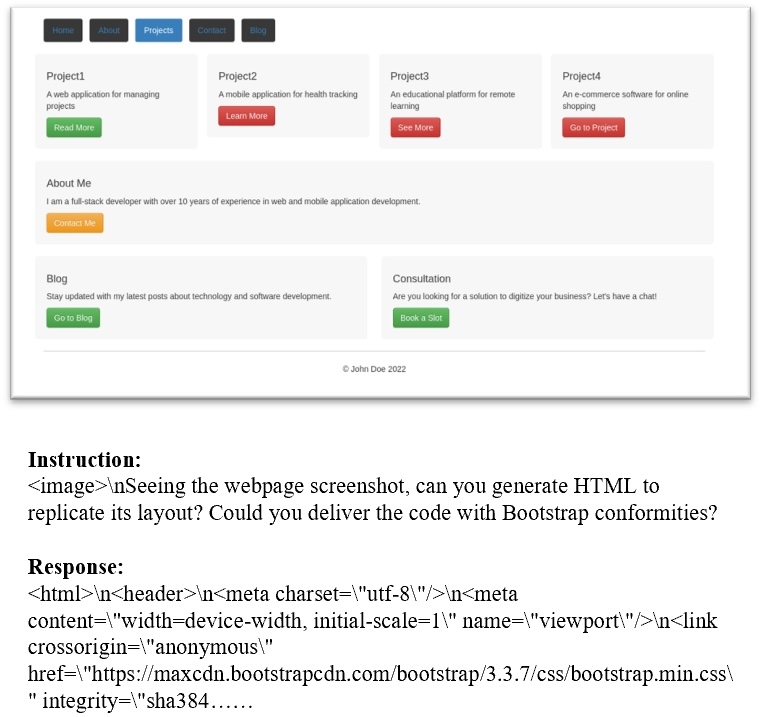

Sukmin Yun*, Haokun Lin*, Rusiru Thushara*, Mohammad Qazim Bhat*, Yongxin Wang*, Zutao Jiang, Mingkai Deng, Jinhong Wang, Tianhua Tao, Junbo Li, Haonan Li, Preslav Nakov, Timothy Baldwin, Zhengzhong Liu, Eric P. Xing, Xiaodan Liang, Zhiqiang Shen NeurIPS 2024, Datasets and Benchmarks website / paper / code / data A large-scale webpage-to-code dataset that enhances the webpage understanding and HTML code translation abilities of MLLMs without compromising general visual capabilities.


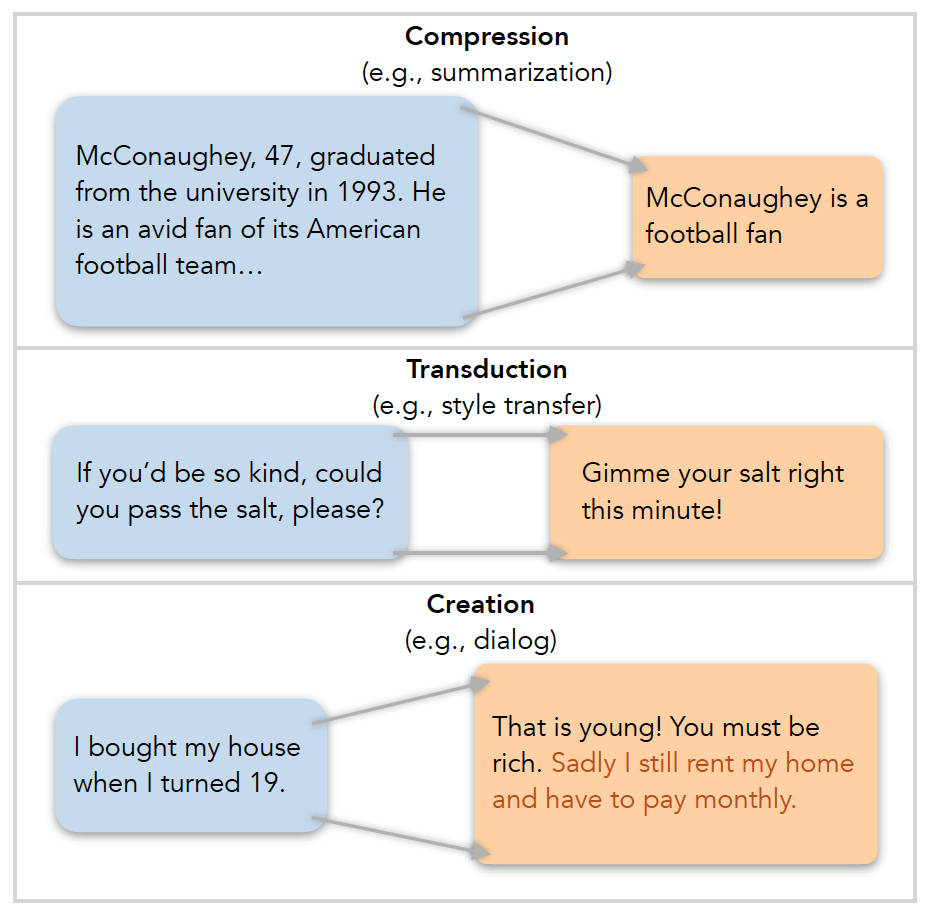

Mingkai Deng*, Bowen Tan*, Zhengzhong Liu, Eric P. Xing, Zhiting Hu EMNLP 2021 paper / slides / blog / code / open-source library A general framework that addresses the difficulty of evaluating natural language generation (NLG) with a single unified operation. Evaluation metrics inspired by the framework improve over SOTA metrics for diverse NLG tasks. Our metrics are available as a library on PyPI and GitHub.


(Alphabetical Order) Brandon Chiou, Mason Choey, Mingkai Deng, Jinyu Hou, Jackie Wang, Ariel Wu, Frank Xu, Zhiting Hu, Hongxia Jin, Li Erran Li, Graham Neubig, Yilin Shen, Eric P. Xing Maitrix.org website / code / demo An open-source web agent that operates in a Chromium-based browser and can perform a broad range of realistic tasks that require complex, long-range, and goal-achieving behavior, such as searching for flights, compiling online shopping options, and researching news coverage. |


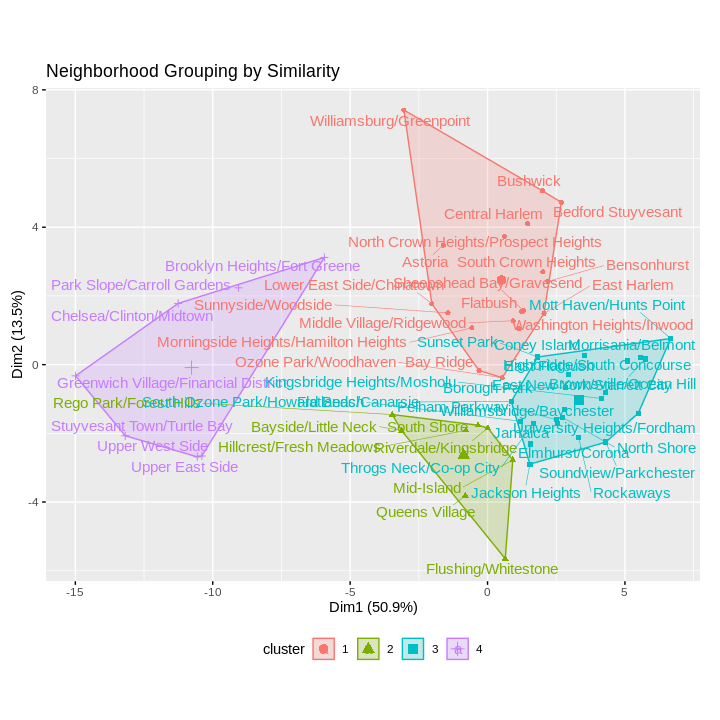

Mingkai Deng, Jerry Shi, Yvonne Zhou ASA DataFest 2018 (Best Insights Award) slides / code A data-driven narrative of NYC gentrification patterns over time and their second-order impact on the city's inhabitants. We construct significant leading predictors using resources from NYC OpenData.


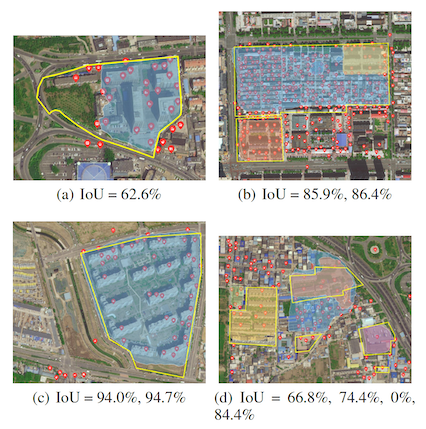

Mingkai Deng*, Guanglei Du*, Xinjiang Lu, Jingbo Zhou, Jing Sun, Yiming Zhang Preprint 2019 paper Combines structured point-of-interest (POI, e.g., parking lots and buildings) data with unstructured satellite image data to automatically identify and draw the boundaries of urban areas-of-interest (e.g., residential areas and campuses).


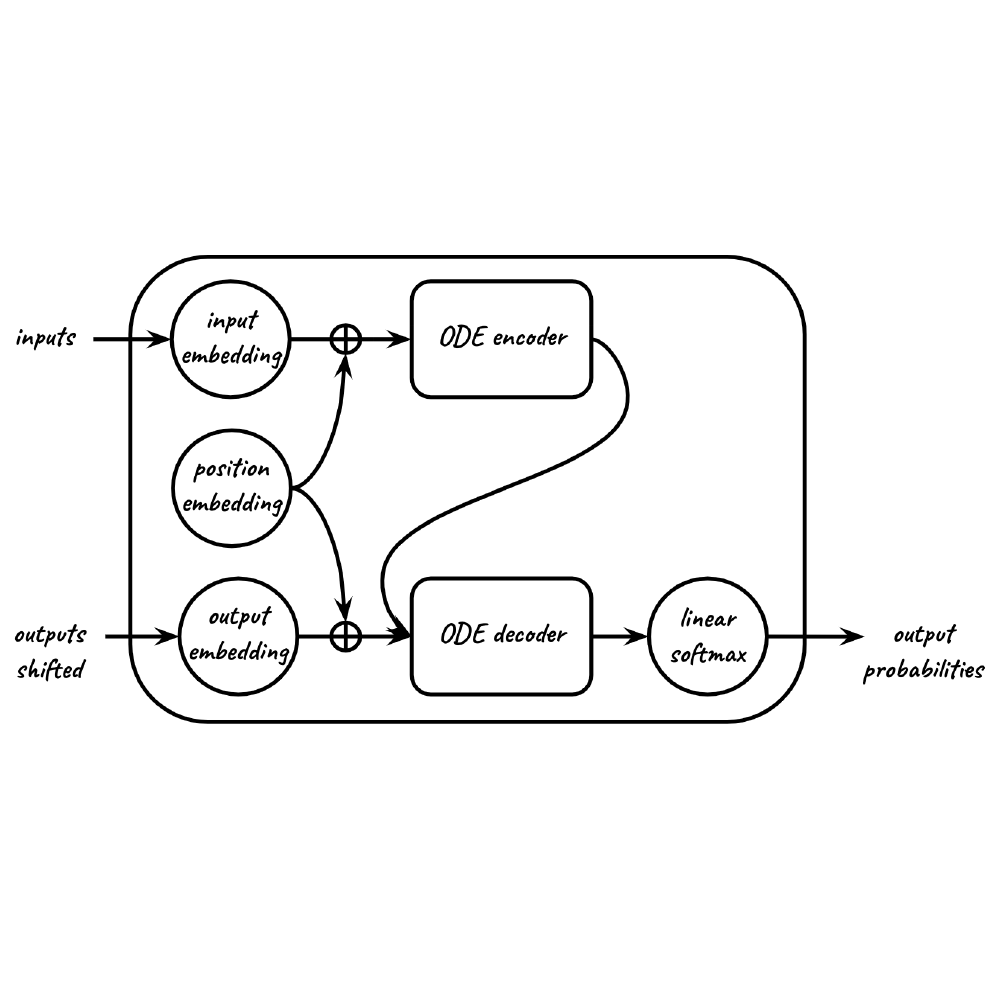

Mingkai Deng, Biqing Qiu, Yanda Chen, Iddo Drori Preprint 2019 paper / code Formulates the Transformer model as an ordinary differential equation (ODE) and performs forward-backward operations using an ODE solver. The resulting continuous-depth model has fewer parameters, converges faster, and shows promising performance in empirical experiments.

